AI-Computing Optical Networks: Scenarios and Trends

The AI-Computing Optical Network provides optical connectivity within, between, and to AI-Computing Data Centers. It is a key technology for enabling mesh interconnections to support high-speed direct connections between these centers. This paper explores the main application scenarios and future development trends of the AI-Computing Optical Network, aiming to provide a reference for research and practice in relevant fields.

Main Application Scenarios of AI-Computing Optical Networks

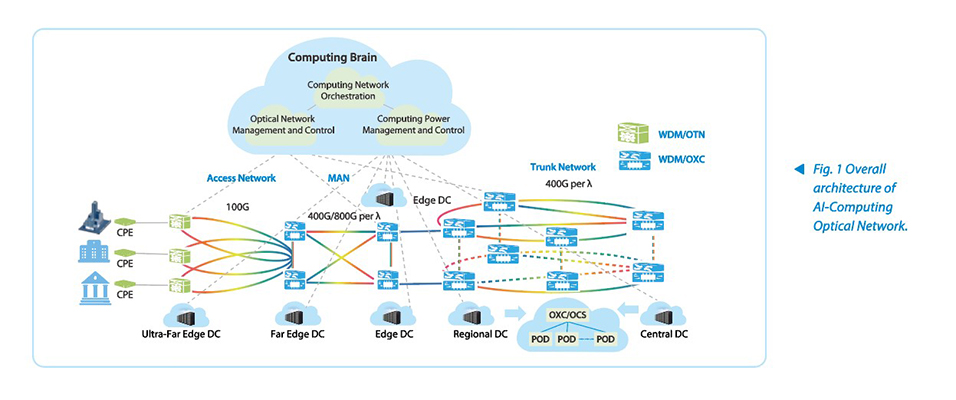

Driven by the demands of AI-Computing, the development and deployment of the AI-Computing Optical Network will accelerate. Optical networks are present in various deployment scenarios of AI-Computing Data Centers. Fig. 1 illustrates the overall architecture of an AI-Computing Optical Network.

As shown in Fig. 1, central DCs, regional DCs, edge DCs, and far edge DCs are deployed from the backbone network to the MAN. These DCs are connected to the AI-Computing Optical Network via WDM/OXC NEs, with each DC directly connected to the optical network and assigned bandwidth according to its service requirements. Regional and central DCs are large in scale. To enable the scale-up of AI-Computing Data Centers, multiple PODs can be interconnected through the OXC to build a GPU pool with more than 10,000 cards. The access network is deployed with WDM/OTN devices and ultra-far edge DC devices to facilitate enterprise access to computing-related services, such as federated learning, processing of enterprise training data without persistent storage, and services combining inference and training.

Intelligent management and control of AI-Computing Optical Networks is key to efficient computing power scheduling. By utilizing a unified computing network orchestration system, an all-optical network management system, and a computing power management platform (computing network brain), efficient resource scheduling and computing power provisioning can be achieved for end-to-end integrated computing and network services.

High throughput in an AI-Computing Optical Network also requires end-to-end network coordination. Information about packet loss caused by changes in network delay or suboptimal conditions needs to be promptly synchronized with the GPU NIC driver configuration. The parameters of the RDMA NIC are dynamically adjusted according to network latency, and RoCE network buffers are resized to track changes in round-trip time (RTT), ensuring lossless transmission over the AI-Computing Optical Network and efficient use of computing resources.
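The link between RTT and buffer size can be illustrated with the bandwidth-delay product: to keep a link full without loss, roughly one RTT's worth of data must be buffered. The sketch below is only an illustration of that relationship; the function name `bdp_bytes` and the 400 Gbps port rate are assumptions, not parameters given in this paper.

```python
def bdp_bytes(link_rate_gbps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product in bytes: the data in flight during one RTT."""
    bits_in_flight = link_rate_gbps * 1e9 * rtt_ms / 1000  # rate (bit/s) x RTT (s)
    return bits_in_flight / 8


if __name__ == "__main__":
    # Assumed 400 Gbps port; the RTT values are illustrative only.
    for rtt_ms in (1, 5, 20):
        megabytes = bdp_bytes(400, rtt_ms) / 1e6
        print(f"RTT {rtt_ms:>2} ms -> about {megabytes:.0f} MB in flight")
```

Because the required buffer grows linearly with RTT, a path change that lengthens the fiber route calls for a proportionally larger buffer on the same port.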

In general, AI-Computing Data Centers, driven by both hardware acceleration and technological innovation, are developing rapidly. They will gradually evolve to virtualize the diverse GPU cards, currently dispersed across multiple locations, into a single "Super AI-Computing Data Center," similar to general-purpose computing services. This Super AI-Computing Data Center will be used for super-large-scale joint training and inference services and can be virtualized into many intelligent computing services that can be leased to enterprises and even individual users, making these services as easy to access as tap water.

At present, an AI-Computing Optical Network mainly includes scenarios such as DCI, DCN, and DCA.

AI-Computing Data Center Interconnection (DCI)

AI-Computing Data Centers are the core transport nodes for computing power, and their efficient interconnection is crucial for the coordinated scheduling of computing power resources. The AI-Computing Optical Network uses all-optical DCI technology (WDM/OXC) to establish all-optical connections between data centers with a single-fiber capacity of 100 Tbps. This high-speed interconnection coordinates and dispatches computing power to meet increasing demand, effectively alleviating pressure on individual data centers and supporting customers' local access to computing resources.

Specifically, core and regional AI-Computing Data Centers are generally located in areas with intensive computing demands and abundant energy resources. They involve the construction of ultra-large AI-Computing Data Centers or clusters of such centers, enabling interconnection among centers with over 10,000 GPU cards. With a 4:1 convergence ratio, the bandwidth demand can reach thousands of Tbps. Edge AI-Computing Data Centers, interconnected with core and regional centers, are located in core city-level equipment rooms, key equipment rooms in developed districts and counties, or high-traffic integrated business areas. They deploy 1000+ GPU cards, 100+ GPU cards, or even fewer to the customer edge, enabling 1 ms access to computing resources.

AI-Computing Data Center Network (DCN)

In the AI-Computing Data Center, the network uses a 1:1 convergence ratio, and network performance directly affects the utilization efficiency of computing resources. The AI-Computing Optical Network uses all-optical cross-connect scheduling technology (WDM/OXC) to optimize the performance and reliability of hybrid optical–electrical networking. This technology improves the utilization efficiency of computing power in data centers, supports the efficient execution of large-scale parallel computing tasks, reduces power consumption per bit, is insensitive to port rates, and extends the network’s evolution period. It meets the challenges of zero packet loss, low latency, and high-burst traffic in the internal networks of AI-Computing Data Centers.

Specifically, the OXC and WDM technologies are used in each POD network inside the AI-Computing Data Center, enabling a per-port transmission capacity of 100 Tbps. An interconnection capacity of 4000 Tbps for a 10,000-GPU pool can be achieved with 40 pairs of optical fibers, greatly reducing the OPEX and CAPEX of the network. The rate of the GPU NIC in the internal network of the AI-Computing Data Center evolves every two to three years. However, OXC is not sensitive to the rate, and can support the smooth evolution and hybrid networking of the AI-Computing Data Center Network from 200GE, 400GE, and 800GE to 1.6TE.
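The arithmetic behind the 4000 Tbps and 40-fiber-pair figures can be reproduced with a short sketch. The 100 Tbps per-fiber capacity, the 10,000-GPU pool, and the 1:1 convergence ratio come from the text; the 400 Gbps NIC rate and the function names are assumptions chosen for illustration.

```python
import math


def pool_bandwidth_tbps(gpu_count: int, nic_gbps: float, convergence: float = 1.0) -> float:
    """Aggregate interconnect demand in Tbps for a GPU pool.

    convergence is the N in an N:1 convergence ratio (1.0 means 1:1).
    """
    return gpu_count * nic_gbps / convergence / 1000


def fiber_pairs_needed(demand_tbps: float, per_fiber_tbps: float = 100.0) -> int:
    """Number of fiber pairs required to carry the demand."""
    return math.ceil(demand_tbps / per_fiber_tbps)


if __name__ == "__main__":
    # Assumption: one 400 Gbps NIC per GPU, 1:1 convergence inside the DC.
    demand = pool_bandwidth_tbps(10_000, 400)
    print(demand, "Tbps ->", fiber_pairs_needed(demand), "fiber pairs")
```

Under these assumptions the demand is 4000 Tbps, which fits in 40 fiber pairs at 100 Tbps each. Doubling the assumed NIC rate to 800 Gbps doubles both figures, while the OXC layer itself stays unchanged.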

Data Center Access (DCA)

The computing power access network is the “first hop” for users to access computing power resources, and its performance directly impacts user experience. Using government and enterprise private-line access technology, the AI-Computing Optical Network supports high-quality, flexible access at rates ranging from 10 Mbps to 100 Gbps for customers such as parks, enterprises, and governments. It enables 1 ms access to edge AI-Computing Data Centers, 5 ms access to regional AI-Computing Data Centers, and 20 ms access to core AI-Computing Data Centers.
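These latency tiers translate into approximate fiber distances. The sketch below assumes that each figure is a round-trip budget, that all of it is spent on propagation in standard single-mode fiber (group index of about 1.468, roughly 4.9 µs per km), and that equipment delay is ignored. None of these assumptions is stated in the text, so the results are upper bounds for illustration only.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # vacuum speed of light
GROUP_INDEX_SMF = 1.468             # typical value for standard single-mode fiber


def max_fiber_km(latency_ms: float, round_trip: bool = True) -> float:
    """Longest fiber route whose propagation delay fits the latency budget."""
    one_way_s = latency_ms / 1000 / (2 if round_trip else 1)
    return one_way_s * SPEED_OF_LIGHT_KM_S / GROUP_INDEX_SMF


if __name__ == "__main__":
    for tier, budget_ms in (("edge", 1), ("regional", 5), ("core", 20)):
        print(f"{tier:>8}: {budget_ms:>2} ms -> up to {max_fiber_km(budget_ms):.0f} km of fiber")
```

Under these assumptions, the three tiers correspond to fiber routes of roughly 100 km, 500 km, and 2,000 km, which is consistent with edge, regional, and core placement.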

Trends in AI-Computing Optical Networks

The development of AI-Computing Optical Networks faces many technical difficulties and requirements. Ultra-high-rate transmission, all-optical cross-connects, optical network-aware computing-power services, AI-based intelligence, low-carbon and secure control, and new optical fibers are the development trends for AI-Computing Optical Networks in the next 10 years.

Continuous Breakthrough in Ultra-High-Speed Optical Transport Technology

With the continuous growth of traffic, optical networks are evolving to higher rates. At present, 400G has become the mainstream for backbone network construction, and the T-bit era, including 800G, 1.2T, and even 1.6T, is accelerating. Optical DSP chip technology has reached its extreme limits. To achieve a bandwidth of 1.6 Tbps, SerDes rates need to be boosted to approximately 450 GBd.
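To give a feel for how line rate, modulation format, and symbol rate relate, the following sketch computes the symbol rate of a dual-polarization coherent signal. The text does not state which modulation format or overhead underlies the 450 GBd figure; the formats and the 12.5% overhead used here are illustrative assumptions, and `symbol_rate_gbd` is a hypothetical helper.

```python
def symbol_rate_gbd(net_rate_gbps: float,
                    bits_per_symbol: int,
                    overhead: float = 0.125,
                    polarizations: int = 2) -> float:
    """Symbol rate (GBd) needed to carry net_rate_gbps.

    bits_per_symbol is per polarization (2 for QPSK, 4 for 16QAM);
    overhead is the assumed fractional FEC and framing overhead.
    """
    gross_rate_gbps = net_rate_gbps * (1 + overhead)
    return gross_rate_gbps / (bits_per_symbol * polarizations)


if __name__ == "__main__":
    for name, bits in (("DP-QPSK", 2), ("DP-16QAM", 4)):
        print(f"1.6T with {name}: {symbol_rate_gbd(1600, bits):.0f} GBd")
```

Under these assumptions, a 1.6 Tbps wavelength needs about 450 GBd with DP-QPSK and about 225 GBd with DP-16QAM, which shows the trade-off between symbol rate and modulation order that higher single-wavelength rates must manage.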

As the single-wavelength rate increases, the spectrum bandwidth occupied by each wavelength also grows, so fewer wavelengths fit in a given band. As a result, single-fiber capacity does not increase linearly with the single-wavelength rate. Therefore, expanding to additional bands, such as C+L and S+C+L, will be key to increasing single-fiber capacity and meeting the ultra-large bandwidth requirements of interconnections between AI-Computing Data Centers.
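A small calculation illustrates why a higher single-wavelength rate does not by itself raise single-fiber capacity. All numbers below (a 6 THz band, 150 GHz and 300 GHz channel spacings) are hypothetical values chosen for illustration and are not taken from this paper.

```python
def fiber_capacity_tbps(band_ghz: int, channel_spacing_ghz: int, rate_gbps: int) -> float:
    """Single-fiber capacity: number of channels that fit in the band times rate."""
    channels = band_ghz // channel_spacing_ghz
    return channels * rate_gbps / 1000


if __name__ == "__main__":
    one_band = 6_000    # hypothetical 6 THz band
    two_bands = 12_000  # hypothetical two bands (for example C+L) of 6 THz each

    # Doubling the rate while doubling the channel width leaves capacity flat.
    print(fiber_capacity_tbps(one_band, 150, 400))   # 400G in 150 GHz slots
    print(fiber_capacity_tbps(one_band, 300, 800))   # 800G in 300 GHz slots
    # Adding a second band is what raises capacity.
    print(fiber_capacity_tbps(two_bands, 300, 800))
```

With these hypothetical values the first two cases give the same capacity, because the channel count halves as the rate doubles, while the two-band case doubles it.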

In terms of modulation, single-wavelength rates, subcarriers, and multi-lane technologies are advancing in parallel, and optical-electrical integration, such as co-packaged optics (CPO), is used to improve efficiency and reduce power consumption.

Ubiquitous All-Optical Networks with Service Awareness and End-Network Coordination

In the medium to long term, all-optical networks will become the core infrastructure of the computing power era. By optimizing DCA, DCI, and DCN, all-optical networks can enable more efficient resource scheduling. The all-optical network is a key technology that supports 1 ms access to AI-Computing Data Centers, 5-20 ms interconnection between AI-Computing Data Centers, and microsecond-level interconnection among PODs in AI-Computing Data Center Networks.

Key technologies for all-optical networks include the evolution and efficiency enhancement of all-optical cross-connects and coherent tunable optical modules, the full digitalization of the optical layer, and advancements in control plane technology. Routing in the AI-Computing Data Center will be synchronized with ROADM/OXC-based switching, realizing all-optical routing and one-time provisioning of all-optical services. Comprehensive digitalization of optical fibers and optical components will enable the digitization of analog signals and real-time simulation of nonlinear data. With enhanced control plane technology, optical layer services will achieve provisioning efficiency comparable to that of the electrical/IP layer, while optical layer recovery will attain performance comparable to that of protection switching, with service interruption times of less than 50 ms.

Service Ethernet interfaces in all-optical networks need to support service awareness, enabling the perception of customer service types, optical fiber network changes, and latency variations. Based on service types, the interfaces ensure SLA fulfillment and perform optimal path selection. When the optical fiber path changes, they determine the new path latency and notify the computing power orchestration system, which then notifies RoCE switches to adjust interface buffers based on round-trip time (RTT). For service interfaces supporting buffering and priority flow control (PFC), automatic parameter adjustment and buffer size matching can be performed to achieve 100% throughput.

All-optical networks can enable one-hop access to computing power by deploying AI compute acceleration cards at the access, aggregation, and core layers. They provide customer services with proximate access to AI-Computing Data Centers, support 1 ms access, and empower OTN private line customers with one-hop access, secure isolation, and high reliability.

Intelligent Evolution

The in-depth integration of AI and optical networks will become a key trend in future development. The optical network itself has a strong foundation in management and control systems, and is well-suited for AI integration. The bidirectional empowerment of AI and optical networks is key to achieving network intelligence.

Through AI technology, real-time simulation and planning of optical networks can be achieved, empowering intelligent network O&M. By integrating GPUs into network equipment, online parameters of optical layer devices and optical fibers are collected for big data analysis, enabling the training of proprietary models. This allows for one-time activation of the optical layer network. After optimizing the efficiency of the control plane, optical layer services recovered by WSON can achieve performance comparable to that of protection switching.

AI-driven O&M and optimization enhance O&M efficiency, rationalize network resource utilization, improve network energy efficiency, and reduce overall network CAPEX and OPEX.

Green, Low-Carbon & Controllable Development

Driven by policy and market focus on sustainable development, green, low-carbon operation will become a core competency of the computing power industry. In the future, enterprises will need to meet energy-saving and carbon reduction goals through technological innovation (e.g., realizing data collection in data-intensive regions, building super-large AI-Computing Data Centers in energy-rich regions for training, and enabling high-speed WDM/OTN interconnection between data-intensive and energy-rich regions) and management optimization, promoting the transformation of computing power development from a "scale-and-speed model" to a "quality-and-efficiency model".

Leveraging the high bandwidth and low latency of the AI-Computing Optical Network, along with regional electricity price differences, algorithms can be employed to find the optimal balance point. The aim is to achieve coordinated optimization of power resources, optical network bandwidth, and computing power energy efficiency between energy-rich and data-intensive regions, promoting green and low-carbon development.

From a security perspective, designating AI-Computing Data Centers in energy-rich regions as backup and disaster recovery centers for data-intensive regions enhances their security and controllability. This necessitates relying on optical networks for high-bandwidth interconnection between AI-Computing Data Centers.

Exploration of New Fiber Technologies

New optical fiber technologies (such as multi-core fiber and hollow-core fiber) are currently in the pilot construction and transmission verification phase. These technologies are expected to further enhance the transmission capacity and performance of optical networks but still face challenges in large-scale manufacturing and adaptability testing in existing networks.

Hollow-core fibers can offer superior spectral efficiency, extending the spectrum to the S-band to achieve S+C+L single-fiber spectral capacity, while enabling a 35% reduction in fiber latency. This is a key technology for the future development of AI-Computing Data Centers, capable of significantly boosting bandwidth and reducing latency.

As a critical infrastructure in the computing power era, the AI-Computing Optical Network is driving the efficient utilization of computing resources and the high-quality development of the digital economy. In the future, with breakthroughs in ultra-high-speed optical transport technology, widespread deployment of all-optical networks, and accelerated intelligent evolution, the AI-Computing Optical Network will play an even more crucial role in the compute era. Concurrently, the development of green initiatives and new optical fiber technologies will provide strong support for the sustainable development of the AI-Computing Optical Network.