Core Network O&M Agent Boosts Efficiency for L4 Autonomy

With the rapid deployment of 5G networks and increasingly diverse service scenarios, the operational complexity of the core network has grown sharply. Traditional O&M models, which rely on manual expertise, struggle to meet demands such as dynamic adjustments of large-scale networks, fault prediction, and self-healing. In this context, autonomous networks powered by large language models (LLMs) and intelligent agents have emerged as a key solution to enhance intelligent O&M for 5G core networks.

The Use of Intelligent Agents in O&M

Agent technology has been widely applied in telecom intelligent O&M. In the core network domain, typical applications include fault management agents and complaint-handling agents.

- Fault management agent: The agent monitors real-time alarm information or processes fault tickets dispatched by external pipelines. It detects anomalies, analyzes root causes, offers handling suggestions, and reports the resolution, so that fault tickets can be answered automatically. In addition, by combining models trained on historical fault-handling experience with real-time data monitoring and analysis, the agent can predict potential faults, issue early warnings, and help reduce the probability of faults.

- Complaint-handling agent: As user expectations for service experience continue to grow, network complaints have become increasingly diverse, involving potential end-to-end issues from terminals to wireless, bearer, and core networks. The lengthy resolution process remains a major challenge. The complaint-handling agent can analyze complaint tickets together with user subscription information, network configuration details, and signaling interaction data. Leveraging large model technology, the agent identifies problems, pinpoints root causes, provides suggestions, and automatically closes tickets. This enables fully automated complaint handling, significantly reduces resolution time, and improves efficiency.
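The fault-handling flow described above (perceive an alarm, detect an anomaly, infer a root cause, suggest an action) can be sketched in a few lines. This is only an illustrative sketch, not ZTE's implementation: the `Alarm` type, the metric names, the `ROOT_CAUSE_RULES` table, and the three-sigma threshold are all assumptions, and the rule table merely stands in for a model trained on historical fault-handling experience.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Alarm:
    element: str   # network element that raised the alarm (hypothetical identifier)
    metric: str    # monitored metric name (hypothetical)
    value: float   # latest observed value


# Hypothetical rule table: metric -> (root cause, handling suggestion).
# A real agent would use a trained model or an LLM here instead.
ROOT_CAUSE_RULES = {
    "cpu_load": ("resource exhaustion", "scale out the instance or rebalance traffic"),
    "reg_fail_rate": ("registration failures", "check subscription data and signaling links"),
}


def is_anomalous(history, value, k=3.0):
    """Return True if value is more than k standard deviations from the historical mean."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) > k * sigma


def handle_alarm(alarm, history):
    """Run detection, root-cause lookup, and suggestion for one alarm."""
    if not is_anomalous(history, alarm.value):
        return {"element": alarm.element, "status": "normal"}
    cause, suggestion = ROOT_CAUSE_RULES.get(
        alarm.metric, ("unknown", "escalate to an on-duty engineer")
    )
    return {
        "element": alarm.element,
        "status": "fault",
        "root_cause": cause,
        "suggestion": suggestion,
    }
```

Calling `handle_alarm(Alarm("AMF-1", "cpu_load", 95.0), [40, 42, 41, 39, 43])` would classify the reading as a fault and return the matching suggestion, whereas a reading close to the historical mean is reported as normal. A complaint-handling agent could follow the same shape, with ticket fields and signaling data as inputs in place of alarm metrics.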

Architecture and Key Technologies of ZTE’s Core Network O&M Agent

System Architecture

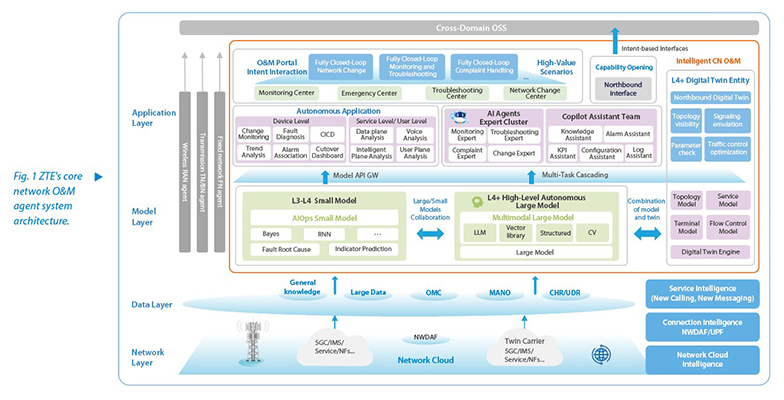

The system architecture of ZTE's core network O&M agent encompasses five layers: network, data, model, application, and digital twin (see Fig. 1).

- Network layer: Serves as the foundation of network operations and includes the existing atomic network elements within the core network.

- Data layer: Provides high-quality corpus data for decision-making in the upper layers.

- Model layer: Uses large and small models as intelligent engines to create a composable, orchestrated, and self-iterating intelligence foundation.

- Application layer: Orchestrates various application capabilities to meet O&M needs of diverse scenarios.

- Digital twin: Constructs a "business model + twin application" architecture to facilitate business innovation and network optimization.

Key Technologies

To realize these capabilities, the core network O&M agent employs several key technologies:

- Graph-RAG: Graph-based retrieval-augmented generation (Graph-RAG) integrates knowledge graphs with RAG to enhance LLM reasoning, overcoming traditional RAG’s limitations in complex queries and multi-hop reasoning.

- AI Agent: Multi-agent collaboration enables multiple agents to communicate and cooperate in a shared environment to accomplish common goals. Each agent has a certain degree of autonomy and intelligence to perceive, decide, and act based on environmental information. This collaboration allows the entire system to benefit from their complementary strengths, resulting in more efficient and intelligent decision-making. Based on this architecture, specialized agents—such as knowledge experts, fault experts, on-duty experts, and complaint experts—can be created to jointly build an intelligent O&M system.

- MCP: Model context protocol (MCP) standardizes how LLMs or generative AI systems understand, store, and utilize contextual information. It establishes a unified communication interface between AI models and external data sources or tools, covering capabilities such as context window management, multi-turn dialogue maintenance, and dynamic context updates. MCP functions like a USB interface for AI: any compliant AI model or tool can achieve fast "plug-and-play" integration, without custom interface code for each integration and without being tied to a particular programming language. Compared with early function calling in large models, it significantly improves interaction modes, capability definition, protocol standardization, and ecosystem openness.

- Digital Twin: Digital twin technology uses discrete event simulation algorithms and related techniques to construct a digital twin model of the core network. This model supports O&M through visualization, simulation, predictive analysis, and strategy feedback, enabling qualitative and quantitative analysis at low cost. It facilitates the transition of decision-making from human-led processes to machine-assisted and even machine-autonomous operations, accelerating the evolution toward advanced autonomous intelligent O&M, and ultimately enabling full closed-loop automation.
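To make the discrete event simulation idea behind the digital twin more concrete, the following is a minimal sketch that models one network function as a single-server queue. It is not ZTE's twin model: the function name `simulate`, the exponential arrival and service times, and all parameter values are assumptions chosen only for illustration.

```python
import heapq
import random
from collections import deque


def simulate(arrival_rate, service_rate, horizon, seed=0):
    """Discrete event simulation of a single-server FIFO queue.

    Returns the mean time that requests waited before entering service,
    counting only requests that started service before the horizon.
    """
    rng = random.Random(seed)
    # Event list ordered by time; each event is (time, kind).
    events = [(rng.expovariate(arrival_rate), "arrival")]
    waiting = deque()   # arrival times of requests waiting for the server
    busy = False
    waits = []

    while events:
        now, kind = heapq.heappop(events)
        if now > horizon:
            break
        if kind == "arrival":
            # Schedule the next arrival.
            heapq.heappush(events, (now + rng.expovariate(arrival_rate), "arrival"))
            if busy:
                waiting.append(now)
            else:
                busy = True
                waits.append(0.0)
                heapq.heappush(events, (now + rng.expovariate(service_rate), "departure"))
        else:  # departure: the server becomes free
            if waiting:
                arrived = waiting.popleft()
                waits.append(now - arrived)
                heapq.heappush(events, (now + rng.expovariate(service_rate), "departure"))
            else:
                busy = False

    return sum(waits) / len(waits) if waits else 0.0
```

Running `simulate` with two different arrival rates against the same service rate gives a simple "what-if" comparison of the kind a twin can provide before a change is applied to the live network, for example estimating how much queueing delay grows when signaling load approaches capacity.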

The development of intelligent agents is shifting O&M models from "automation" to "autonomy," though key challenges in reliability, security, and collaboration remain. With breakthroughs in intelligent agents and digital twins, intelligent O&M is expected to achieve widespread implementation of L4 autonomy within the next five years, laying the foundation for highly autonomous intelligence in the 6G era. Continuous innovation, scenario-driven practice, and sustained R&D are essential to accelerate this process.